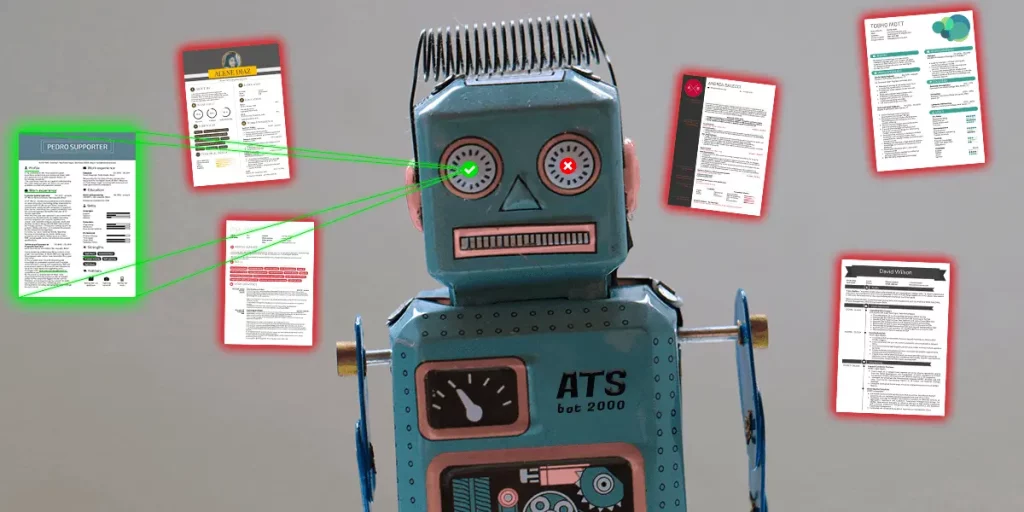

If you've ever looked for a job, you've probably heard of applicant tracking systems (ATSs). These programs are designed to process the huge number of resumes that each corporate job posting attracts and narrow them down to about a dozen.

This means that only a tiny fraction of resumes ever gets read by a human.

Of course, no one wants to job search in vain just because a machine thinks they're not a good fit. Ultimately, however, it all depends on you and the content of your resume.

The good news is that you can get your resume past most ATSs with a few simple tricks. Once you understand how these systems work, you'll be able to redesign your resume and get the edge you need to land that highly coveted job.

Not all ATSs were created equal

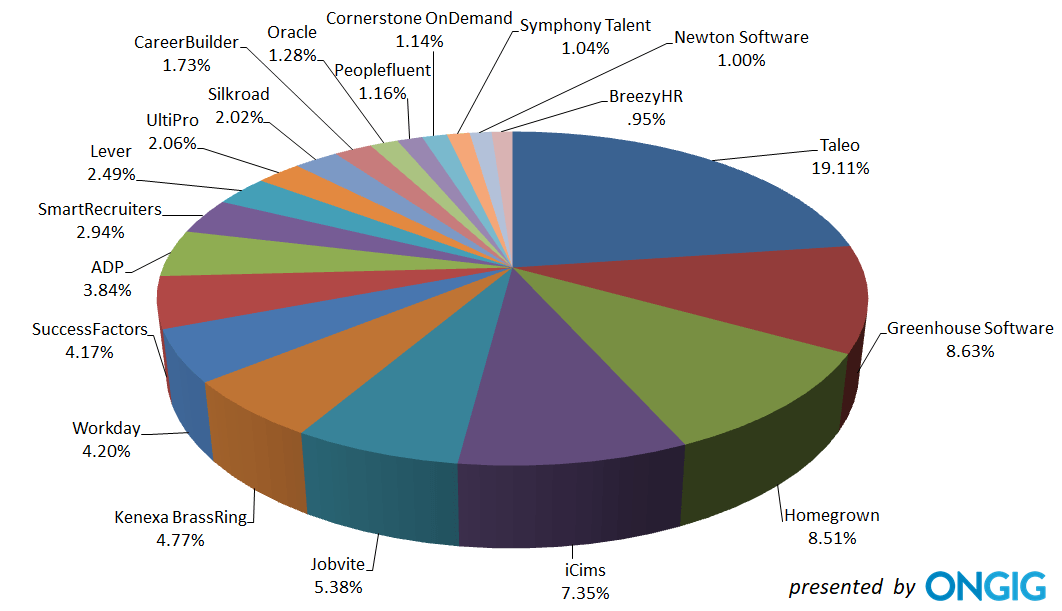

The main problem is that companies use far too many different applicant tracking systems. The pie chart above shows the market share of the top 20 ATSs.

Courtesy of ONGIG

You may try hard to tailor your resume to the most prominent ATSs like Taleo and Greenhouse. Still, there's only a small chance that your target company uses either of them.

What's more, many top global employers (including Google, Facebook, Apple, Starwood or Wyndham) have been developing their own in-house ATSs with unknowable quirks. The pie chart calls them "homegrown".

The message is clear: it's impossible to optimize your resume for every single ATS. However, all ATSs are built on a shared set of principles that come from data science, and you can use that to your advantage with almost any ATS.

Keep it simple and use common sense

We'll probably never know exactly how ATS resume screening works. However, there are several things we do know, and you can work with those.

Because human language is extremely complex, programmers rely on simple workarounds. These shortcuts still let them extract the information most relevant to a given company.

When it comes to resume writing, keep things as simple as possible. Your goal, after all, is to have your resume evaluated by a human. If you overdo the formatting, the ATS may struggle to decipher your beautifully designed resume and disqualify it. (By the way, Kickresume templates are ATS-proof. Just saying.)

Also, think about the specific elements hiring managers want to see on a resume, and try to predict how they want their ATS to behave. Here are a few tips on how to shape your resume accordingly:

1. Geography

Most companies want to hire locally to avoid paying relocation bonuses.

That's why they have the ATS parse your address and assign higher priority to addresses closer to their location.

The ATS then compares your address with the job's location and rates you by proximity. The system is also likely to prioritize the address of your most recent job over the first address listed on your resume.
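To make the idea concrete, here's a toy sketch in Python of how proximity scoring might work. It's our own simplification, not code from any real ATS: the coordinates, the 50 km decay scale, and the rule that the most recent job's location outranks the header address are all assumptions made for illustration.

```python
import math
from dataclasses import dataclass
from typing import Optional, Tuple

LatLon = Tuple[float, float]  # (latitude, longitude) in degrees

@dataclass
class Candidate:
    name: str
    home: LatLon                       # address from the resume header
    last_job: Optional[LatLon] = None  # most recent job's location, if listed

def haversine_km(a: LatLon, b: LatLon) -> float:
    """Great-circle distance between two (lat, lon) points, in kilometres."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * 6371.0 * math.asin(math.sqrt(h))

def proximity_score(c: Candidate, job: LatLon) -> float:
    # Assumed rule: the most recent job's location outranks the header address.
    origin = c.last_job if c.last_job is not None else c.home
    # 1.0 at zero distance, decaying with distance (the 50 km scale is arbitrary).
    return 1.0 / (1.0 + haversine_km(origin, job) / 50.0)

job = (40.7831, -73.9712)  # Manhattan (approximate)
brooklyn = (40.6782, -73.9442)
boston = (42.3601, -71.0589)

candidates = [
    Candidate("lives in Brooklyn", brooklyn),
    Candidate("lives in Brooklyn, last job in Boston", brooklyn, boston),
    Candidate("lives in Boston", boston),
]
ranked = sorted(candidates, key=lambda c: proximity_score(c, job), reverse=True)
for c in ranked:
    print(f"{proximity_score(c, job):.2f}  {c.name}")
```

Note how the second candidate, despite living in Brooklyn, scores like someone from Boston under this assumed rule. That's exactly the situation the advice below tries to help you avoid.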

What can you do with this information?

First, make sure the city listed on your resume matches the city in the job posting. Follow the City, XX convention (where XX is the two-letter state abbreviation) so the ATS can parse the location easily. To match locations, list the broader metropolitan area: if you want a job in Queens but live in Brooklyn, use New York City as your address.

Still, there are situations when you should consider leaving locations out of your work experience section: for example, if you've relocated a lot over the past few years, if your last job was outside your current city, or if you live on a street with a confusing name (such as Paris Street or Madrid Avenue) that could make a poorly designed ATS think you're from another part of the world and need a visa.

2. Skills

One of the first things companies check for is whether the applicants have all the skills necessary for the job.

An ATS also usually tries to estimate how long you've used each skill. If you worked with Java at a job you started 10 years ago, the system credits you with 10 years of Java experience.
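Here's a minimal Python sketch of that naive calculation, assuming the system simply counts from the start date of the earliest job that lists a skill up to today, as in the Java example. The function name `credited_years` and the input format are hypothetical; real systems may also look at end dates or other signals.

```python
from datetime import date

def credited_years(jobs, today):
    """Naive estimate: each skill is credited from the start of the earliest
    job that lists it until `today`, even if that job has since ended."""
    credited = {}
    for start, skills in jobs:
        years = (today - start).days / 365.25  # average year length incl. leap years
        for skill in skills:
            # Keep the longest span found for each skill.
            credited[skill] = max(credited.get(skill, 0.0), years)
    return credited

jobs = [
    (date(2014, 1, 1), ["Java", "SQL"]),     # first job, started ten years before "today"
    (date(2020, 1, 1), ["Java", "Python"]),  # current job
]
for skill, years in credited_years(jobs, today=date(2024, 1, 1)).items():
    print(f"{skill}: {years:.1f} years")
```

Under this assumption, mentioning a skill in an older role is what earns you the longer credit, which is one more reason to list your tools in each job where you actually used them.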

The similarity between your resume and the job description can be measured, too. If you leave out mandatory keywords or don't use them often enough throughout your resume, your chances of passing the ATS screening are extremely low.
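One simple way to measure that similarity is keyword coverage: which required keywords appear, and how often. The Python sketch below is a hypothetical illustration, not any vendor's algorithm. The keyword list, the single-word tokenizer, and the `min_count=2` threshold for "frequent enough" are all assumptions.

```python
import re
from collections import Counter

def tokenize(text):
    # Lowercased single-word tokens; "+" and "#" are kept so "C++" and "C#" survive.
    return re.findall(r"[a-z0-9+#]+", text.lower())

def keyword_report(resume_text, keywords, min_count=2):
    """Return (coverage, missing, weak) for a list of single-word keywords.
    min_count is an assumed threshold for 'used often enough'."""
    counts = Counter(tokenize(resume_text))
    hits = {kw: counts[kw.lower()] for kw in keywords}
    missing = [kw for kw, n in hits.items() if n == 0]
    weak = [kw for kw, n in hits.items() if 0 < n < min_count]
    coverage = (len(keywords) - len(missing)) / len(keywords)
    return coverage, missing, weak

resume = ("Backend developer. Built Java services and SQL reporting. "
          "Led a Java migration to Kubernetes.")
keywords = ["Java", "SQL", "Kubernetes", "Terraform"]  # hypothetical job-description keywords
coverage, missing, weak = keyword_report(resume, keywords)
print(f"coverage={coverage:.0%} missing={missing} mentioned once={weak}")
```

Even this crude check shows why exact wording matters: a resume that says "K8s" instead of "Kubernetes" would count as missing the keyword.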

When designing your resume, make sure you've included the most important keywords from the job description and put them near the top. On the other hand, if you already know the company doesn't use an ATS to screen your resume, it's better to leave your technical skills at the bottom.

Lastly, give your work experience section a straightforward layout. A logical, clear resume design ensures that an ATS won't struggle to find the details it's looking for.

3. Education

Companies like to look at job seekers' academic backgrounds to gauge their potential.

That's why an ATS often uses school rankings to assess candidates. If you're still in school, a conventional .edu email address makes it easy for the parser to identify your school and include it in the dashboard summary of candidates.

In other words, if you still have your university email address, use it to associate yourself with the school's reputation. Make sure to include the full name of the school together with its abbreviation, e.g. Massachusetts Institute of Technology (MIT).
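Why does a .edu address help? A parser can map the email domain straight to a school name. This is a small hypothetical Python sketch of that idea; the `EDU_DOMAINS` table and the `school_from_email` helper are made up for illustration, and a real system would rely on a far larger school database.

```python
# Hypothetical lookup table; a real parser would use a much larger school database.
EDU_DOMAINS = {
    "mit.edu": "Massachusetts Institute of Technology (MIT)",
    "harvard.edu": "Harvard University",
    "yale.edu": "Yale University",
}

def school_from_email(email):
    """Return the school for a .edu address, or None if it can't be identified."""
    domain = email.rsplit("@", 1)[-1].lower()
    labels = domain.split(".")
    if len(labels) < 2 or labels[-1] != "edu":
        return None  # not a .edu address, so there is nothing to look up
    # Keep only the last two labels so subdomains like "alum.mit.edu" still match.
    return EDU_DOMAINS.get(".".join(labels[-2:]))

for address in ["jane.doe@mit.edu", "jdoe@alum.mit.edu", "jane.doe@gmail.com"]:
    print(address, "->", school_from_email(address))
```

A Gmail address gives the parser nothing to work with, so in this sketch the school would have to be found elsewhere on the resume, which is why spelling out the full name and abbreviation still matters.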

However, if your academic background isn't something you're especially proud of, consider earning an online certificate from a top university such as Harvard or Yale. You'll then be able to use the school's name to your advantage.

Your path to success

Recruiters love smart applicant tracking systems because they make their jobs easier. Unfortunately, some of these systems are poorly designed.

Of course, we'll never know exactly how ATSs work. However, you can always try to predict what HR managers want them to do. These systems assess your relevant work experience, academic background, and technical skills with varying degrees of accuracy.

If you want to boost your chances of scoring a job, you need to adopt the hiring manager's point of view and make your resume align with their vision.

This approach will help you come to grips with the hiring process and keep the ATS from turning your resume into a statistic. Instead, you'll end up with a simple, high-quality document that you can use for multiple openings without any major changes.

Isn't it great to have the inside track on crafting an ATS-friendly resume? Why not keep that momentum going by exploring our comprehensive collection of resume samples?